Essays and Conversations on Community & Belonging

Demolition by Design: How Social Media Breaks the Public Square

Social media's defenders point to experiments showing that platforms don't change votes or persuade users. They're right about persuasion and wrong about the wreckage. Democracy needs deliberators, not just voters, and people sense that it is broken because they are living through a loss that experiments can't measure: the erosion of the capacity for sustained, uncomfortable engagement that turns disagreement into productive discourse rather than tribal performance.

THE FRACTURED REPUBLIC · SYSTEMS & INCENTIVES · DIGITAL LIFE & THE ATTENTION ECONOMY · THE ALGORITHMIC CAGE · HIGH-BANDWIDTH SIGNAL · THE ARCHITECTURE OF FRAGMENTATION · SOCIAL & POLITICAL COMMENTARY

Alex Pilkington

1/11/2026 · 13 min read

The Wrecking Ball is Architectural, Not Just Political

Dan Williams's Asterisk essay is the most rigorous empirical challenge to the "social media broke democracy" narrative I've encountered. He marshals randomized field experiments, cross-national comparisons, historical precedent, and decades of media effects research to argue that blaming social media for America's epistemic crisis is fundamentally misguided. His case is compelling, data-rich, and methodologically sophisticated.

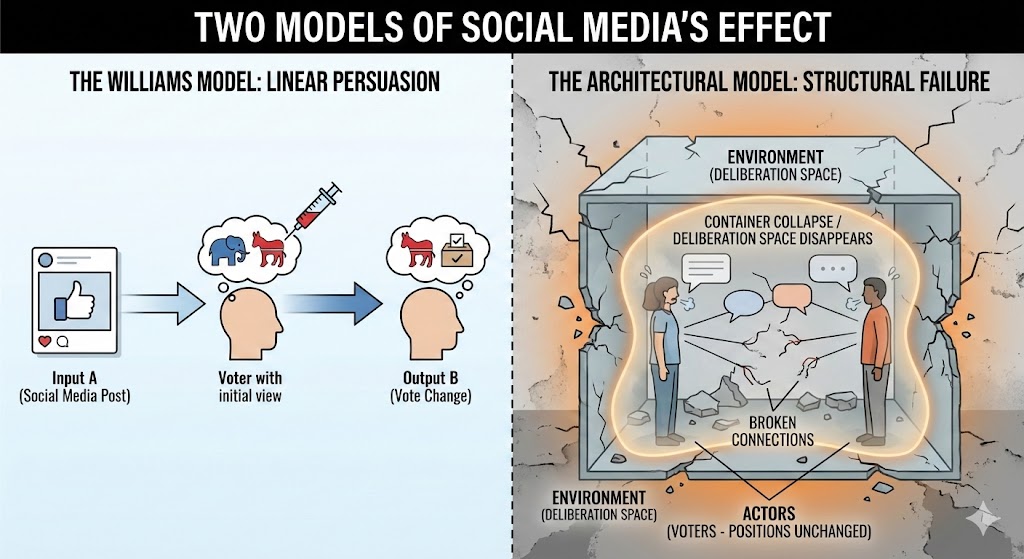

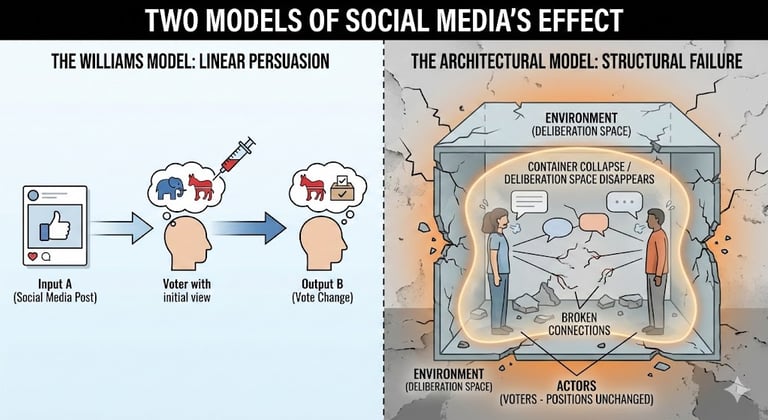

Yet it rests on a fundamental category error: Williams measures persuasion when he should be measuring architecture.

The question isn't whether Facebook changed your vote—it's whether Facebook destroyed the environment where meaningful deliberation can occur at all. This isn't a quibble about emphasis. It's the difference between asking "Did this poison kill you?" and "Did this poison make it impossible to breathe?" The answer to the first question might be no. The answer to the second determines whether we're having this conversation at all.

What Williams Gets Right

Before challenging Williams's framework, I need to acknowledge what he establishes convincingly:

The experimental evidence is striking. The Facebook/Instagram Election Study represents the gold standard for causal inference. When Nyhan's team reduced users' exposure to like-minded sources, when Guess's team switched users to chronological feeds, when Allcott's team deactivated accounts for six weeks—none produced meaningful changes in political attitudes, polarization, or voting behavior. These aren't marginal studies with questionable designs. They're large-scale, pre-registered, randomized experiments with precisely estimated null effects.

The historical argument is well-taken. Williams is right that epistemic dysfunction isn't new. The Salem witch trials, McCarthyism, tobacco industry propaganda, Iraq WMD failures—America has always struggled with truth. Uscinski's research showing no increase in conspiracy theorizing over time challenges the assumption that we're in unprecedented epistemic territory.

Cross-national variation matters. If social media were the primary driver, countries with similar adoption rates should show similar outcomes. Instead, half the OECD countries studied by Boxell showed decreasing affective polarization during the social media era. The platforms are the same; the outcomes diverge.

Selective exposure is real. Budak's research finds that most users see little false content and that exposure is concentrated among a motivated fringe, which challenges the "everyone's in a rabbit hole" narrative. Altay's finding that the strongest predictor of misinformation panic is the "third-person effect" (assuming others are more gullible than you) reveals how our intuitions mislead us.

Media effects are genuinely limited. Decades of research confirm that political persuasion is hard, propaganda often fails, and people aren't blank slates. The minimal effects of political advertising, even at scale, align with what the experiments Williams cites show.

These findings deserve to be taken seriously. Williams has done the field a service by synthesizing this evidence and challenging lazy technological determinism.

And yet—the case collapses when we examine what these experiments actually measure.

The Category Error: What Experiments Can't Capture

Williams's entire framework depends on attitude change as the outcome variable. Did exposure to different content change your views? Did deactivating your account reduce polarization? Did removing algorithmic curation shift your voting intentions?

The consistent answer is no. But these experiments are testing whether social media is a persuasion machine when the actual concern is that it's a deliberation destroyer.

Consider what the Allcott study actually shows: deactivating Facebook for six weeks doesn't change your political attitudes. Williams treats this as exonerating. But the study also found that deactivation increased subjective well-being, reduced anxiety and depression, and changed how participants spent their time. Users reported feeling better—they just didn't change their minds about politics.

This reveals the deeper question: what if social media's harm isn't that it changes what you think, but that it changes what kind of thinker you can be?

The experiments Williams cites measure:

Vote choice

Candidate favorability

Affective polarization

Political knowledge

Perceived election legitimacy

What they don't—can't—measure:

Your capacity for sustained attention after a decade of infinite scroll

Your tolerance for cognitive dissonance after years of algorithmic validation

Your ability to extend interpretive charity after thousands of context-collapsed arguments

Your skill at perspective-taking after endless identity performance

Your patience for deliberation after being conditioned for hot takes

A six-week experiment measuring vote choice is like testing whether smoking for a month causes lung cancer. The null result doesn't vindicate cigarettes—it reveals the research design's limitations.

Williams acknowledges this objection in passing, noting experiments "only examined effects over a relatively short (three-month) period." But he treats this as a caveat rather than a fatal flaw. The entire case rests on evidence that is structurally incapable of detecting the harms at issue.

Architecture, Not Content

Williams focuses relentlessly on content effects: does encountering different posts change your views? But the crisis isn't about content—it's about the container.

Social media platforms don't just deliver information differently than newspapers or television. They restructure the fundamental grammar of public discourse. This restructuring happens at the architectural level, independent of whether any particular post persuades you of anything.

The mechanics of context collapse

In analog environments, discourse occurs in bounded contexts. You argue about politics at a dinner party with friends, in a town hall with neighbors, in a letter to the editor constrained by word limits and editorial standards. Each context carries implicit norms about tone, evidence, and charity. You self-censor not because you're censored, but because the social context demands it.

Social media demolishes these boundaries. The same tweet is simultaneously addressed to your friends, your enemies, potential employers, strangers who hate you, and journalists looking for outrage. This isn't a bug—it's the architecture. The platform optimizes for broadcasting, not conversation.

The result isn't that you're persuaded differently. It's that the very possibility of persuasion—understood as reaching mutual understanding through dialogue—becomes structurally impossible. You're not talking to someone; you're performing for everyone.

The inversion of social penetration

Altman and Taylor's social penetration theory describes how relationships form through gradual trust-building. You meet someone, establish superficial commonalities, slowly broach more sensitive topics as trust develops. This process creates the relational substrate necessary for disagreement to remain productive.

Social media inverts this entirely. Platforms don't reward the slow building of relationships—they reward immediate tribal signaling. The algorithm amplifies high-arousal content because engagement drives revenue. You don't lead with commonality and gradually reveal disagreement; you lead with disagreement because that's what gets attention.

Williams would respond: but the experiments show people aren't easily manipulated by algorithmic content! Exactly. Because they're not being persuaded—they're being sorted. The algorithm doesn't change your mind; it connects you with others who already think like you and amplifies the content that best signals your tribal identity.

This is why Boxell's finding that polarization increased most among older Americans, the group least likely to use social media, doesn't exonerate platforms. The sorting happens on social media, but its effects ripple outward. When elite discourse is increasingly shaped by Twitter dynamics, when cable news chases viral social media content, and when politicians optimize for engagement metrics, the architectural effects spread beyond the platforms themselves.

Why the Diploma Divide Actually Proves the Point

Williams's explanation of asymmetric polarization through the "diploma divide" is compelling: Republicans have become the party of non-college-educated voters who rightly perceive elite institutions as hostile. This creates receptivity to anti-establishment information sources unconstrained by professional norms.

But consider how this mechanism interacts with platform architecture. Before social media, working-class conservatives felt alienated from elite institutions. This alienation found expression through talk radio and eventually Fox News. But these outlets—however biased—still operated under some editorial constraints, still had to maintain basic credibility, still existed within a broader media ecosystem with overlapping norms.

Social media removes all such constraints. It doesn't just give working-class conservatives a voice—it gives them an infinite choice of alternative epistemic universes, each algorithmically curated to validate their alienation and insulate them from contradictory evidence.

Williams notes that platforms don't run different algorithms for conservatives and liberals. But that's precisely why architecture matters. The same algorithm, applied to populations with different levels of institutional trust, produces radically different outcomes:

For users who trust mainstream institutions, the algorithm surfaces mainstream content, which reinforces that trust.

For users who distrust mainstream institutions, the algorithm surfaces counter-institutional content, which validates that distrust.

The algorithm is neutral in design. Its effect is not: it amplifies whatever level of trust it finds. And when you're amplifying distrust, you're destroying the preconditions for democratic deliberation.
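To make the "same algorithm, different outcomes" claim concrete, here is a deliberately crude toy model in Python. It is a sketch under stated assumptions, not a description of how any real platform ranks content. Every name and number in it is hypothetical: content sits on a single scale from -1 (counter-institutional) to +1 (mainstream), predicted engagement is assumed to be the product of a user's trust and an item's stance, and a user's trust is assumed to drift toward the average stance of the feed they are shown.

```python
# Toy model only: all names, scales, and update rules are illustrative assumptions.

# Hypothetical content pool: stances from -1.0 (counter-institutional) to +1.0 (mainstream).
STANCES = [i / 10 - 1 for i in range(21)]


def rank_feed(trust: float, k: int = 5) -> list[float]:
    """Return the k items with the highest predicted engagement.

    Assumption: engagement is modeled as trust * stance, so the items that
    most strongly validate the user's existing lean score highest. The same
    function is used for every user; nothing here is party-specific.
    """
    return sorted(STANCES, key=lambda stance: trust * stance, reverse=True)[:k]


def simulate(trust: float, rounds: int = 20, rate: float = 0.2) -> float:
    """Repeatedly show a feed and nudge trust toward the feed's average stance."""
    for _ in range(rounds):
        feed = rank_feed(trust)
        feed_mean = sum(feed) / len(feed)
        trust += rate * (feed_mean - trust)  # assumed drift toward what the user sees
    return trust


if __name__ == "__main__":
    # Two users who start close together, on opposite sides of zero.
    print("mildly trusting user ends at:   ", round(simulate(0.2), 2))
    print("mildly distrusting user ends at:", round(simulate(-0.2), 2))
```

In this toy, two users who begin only slightly apart end up far apart, even though the ranking function never distinguishes between them. The divergence comes entirely from the assumed feedback loop between validation and trust, which is the architectural point; whether real users respond this way is exactly what the short-run experiments cannot settle.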

This is why Williams's own evidence about the diploma divide undermines rather than supports his defense of platforms. If the fundamental problem is a "crank realignment" where conspiracy-minded, anti-institutional thinking concentrates on one side, then a communication architecture optimized for viral conspiracy theories, algorithmic rabbit holes, and the systematic bypass of editorial standards isn't neutral infrastructure—it's an accelerant.

Yes, the alienation came first. But would it have metastasized into wholesale rejection of shared reality without platforms that make such rejection structurally sustainable?

What Historical Precedent Actually Shows

Williams's historical argument—that epistemic dysfunction isn't new—cuts both ways. Yes, America has always had conspiracy theories, propaganda, and political lies. But the mechanism through which these spread matters profoundly for their societal impact.

Consider Williams's own examples:

McCarthyism involved centralized propaganda from political elites, spread through traditional media that still operated under some editorial constraints. When McCarthy's claims were discredited at the Army-McCarthy hearings, broadcast nationally on television, the movement collapsed. There was a mechanism for correction.

Tobacco industry propaganda was sophisticated and long-lasting, but it operated within an institutional environment that eventually adjudicated the dispute. Courts, scientific panels, and regulatory agencies—however slowly—converged on truth. The system was flawed but functional.

Iraq WMD failures represented elite media and government dysfunction, but the lies were eventually exposed and documented through traditional investigative journalism. Again—too late, but still functional.

The key difference isn't that pre-social-media America lacked epistemic dysfunction. It's that pre-social-media America retained institutional mechanisms—however imperfect—for correction and convergence.

Social media doesn't just bypass these mechanisms; it makes them structurally obsolete. When every user can access infinite alternative sources curated to validate their priors, there's no mechanism for convergence at all. The system isn't just flawed—it's non-functional by design.

This is why Uscinski's finding that conspiracy theorizing hasn't increased in prevalence misses the point. The question isn't quantity—it's quality and consequence. Pre-internet conspiracy theories were believed by fringe groups with limited reach. Post-internet conspiracy theories can command the allegiance of sitting presidents and shape major party platforms.

The difference isn't how many people believe false things. It's whether false beliefs can be socially contained or whether they can achieve political escape velocity.

The Persuasion Evidence Actually Supports the Architecture Thesis

Williams presents decades of research showing political persuasion is hard and media effects are minimal. He treats this as evidence against social media's impact. But this research actually supports the architectural critique.

If persuasion is genuinely difficult, and people aren't blank slates easily manipulated by content, then how do we explain the dramatic changes in Republican elite discourse over the past decade? How did we go from McCain rejecting anti-Obama conspiracy theories at town halls, to Trump building his political identity around birtherism, to a majority of Republicans believing the 2020 election was stolen?

The standard persuasion model can't explain this. Republicans weren't gradually convinced by clever arguments. Instead, the social media environment made it possible for pre-existing resentments and conspiracy-minded thinking to sort, cluster, and achieve political dominance without ever having to win over skeptics.

This is why selective exposure matters more than Williams acknowledges. Yes, Budak shows that most users have low exposure to false content. But the motivated fringe that seeks out such content isn't random—they're politically engaged, they vote in primaries, they demand ideological purity, and they can now find each other, coordinate, and exert disproportionate influence on party direction.

Before social media, these voters existed but were dispersed and disorganized. After social media, they're networked and mobilized. The platform didn't persuade them—it enabled them.

Williams cites Altay's observation that people "jump in and dig" rather than passively falling into rabbit holes. But this describes mechanism, not innocence. The question is: could they dig as effectively before? Could a conspiracy-minded person in rural Ohio in 1995 find hundreds of like-minded individuals, access thousands of hours of conspiracy content, and coordinate political action without ever encountering contradictory evidence?

The architecture made the jumping and digging possible. That's not exoneration—it's indictment.

The Third-Person Effect and the Reality of Loneliness

Williams leans heavily on Altay and Acerbi's finding that alarmism about misinformation correlates with the "third-person effect"—assuming others are more gullible than you. He implies this reveals that misinformation panic is overblown.

But this finding is entirely consistent with the architectural critique. I'm not worried that I'll be persuaded by false content on social media. I'm worried that ten years of platform use has degraded my attention span, my capacity for nuance, my patience for sustained argument, and my tolerance for the discomfort necessary for genuine learning.

I'm worried that even as I retain my critical thinking about individual posts, the cumulative effect of thousands of context-collapsed interactions has made me worse at the kind of thinking democratic citizenship requires.

I'm worried that my relationships with people who disagree with me politically have become brittle and unproductive not because they were persuaded by propaganda, but because we no longer have the shared conversational norms necessary to navigate disagreement constructively.

These aren't third-person concerns. These are first-person experiences that millions report but that the experiments Williams cites can't measure.

Consider the null effects on "political attitudes" in the deactivation studies. What does this actually tell us? That six weeks off Facebook doesn't make you switch parties? That's not the question. The question is whether chronic Facebook use has changed what kind of conversational partner you can be, what kind of friend, what kind of neighbor, what kind of citizen.

The Allcott study actually gestured at this: deactivation increased subjective well-being and reduced anxiety and depression. Users felt better. They were happier. They had more time. But they didn't change their politics, so Williams treats the platforms as exonerated.

This is backwards. The fact that people feel worse, are more anxious, are more depressed, and have less time when using platforms should be central to assessing democratic health. Democracy requires not just voting but sustained engagement—which requires citizens who aren't exhausted, anxious, and time-starved.

High-Bandwidth vs. Low-Bandwidth Communication

Williams asks for a more persuasive case. Here it is: You cannot build a high-trust society on low-bandwidth communication.

Bandwidth isn't about information volume—it's about the richness of context required for meaning-making. Face-to-face conversation is high-bandwidth: you observe facial expressions, hear tone, see body language, remember past interactions, and operate within shared social constraints that make certain moves illegitimate (like simply walking away mid-sentence).

Social media is low-bandwidth: you see text, maybe an avatar, and nothing stops you from scrolling past anything uncomfortable. More importantly, the architectural constraints that force mutual engagement in physical space—the fact that you have to see the same people tomorrow, that you share institutions, that you can't selectively curate your entire social reality—are absent online.

This matters because trust isn't built through information exchange. Trust is built through repeated interaction under conditions that make defection costly. Physical communities create these conditions automatically: reputational consequences are unavoidable. Digital communities don't: you can always find a new community that validates you.

The experiments Williams cites show that exposure to different content doesn't change attitudes. But trust-building was never about exposure to content. It was about sustained relationships under conditions that force accountability.

This is why the Boxell finding about polarization among older Americans doesn't exonerate platforms. Older Americans use social media least, but they live in a world where institutions have been hollowed out by decades of declining social capital, a decline that predates social media but has been catastrophically accelerated by it.

Robert Putnam's "Bowling Alone" documented the decline of associational life starting in the 1960s. But what sustained social capital through the 1990s was necessity: you still needed physical communities for information, entertainment, and coordination. The internet removed that necessity. Social media turned its removal into a business model.

Now we don't just have the option to avoid physical community—we have algorithmic incentives to replace it with digital simulation. The result isn't that people are persuaded differently. It's that they never develop the capacity for the kind of engagement that makes democratic deliberation possible.

What About Everything Else?

Williams emphasizes that platforms are diverse and evolving, making generalizations difficult. Fair point. But some architectural features are universal:

All major platforms optimize for engagement over accuracy

All create context collapse by mixing bounded social spheres

All enable one-to-many broadcasting that inverts relationship formation

All remove the friction that forces mutual engagement in physical space

All offer infinite content, enabling complete curation of epistemic reality

These features don't require persuasion to harm democratic deliberation. They harm it by making deliberation architecturally impossible.

Williams also notes that experiments are limited—they can't test wholesale platform removal over decades. Absolutely right. But this uncertainty cuts both ways. He wants to maintain "considerable uncertainty" while also claiming "moderately strong confidence" that social media isn't the primary driver of epistemic crisis.

But consider the asymmetry: If we're wrong and social media isn't the culprit, we've over-diagnosed a secondary factor. If Williams is wrong and social media is the culprit, we've missed the primary driver of democratic breakdown while it continues to metastasize.

The precautionary principle matters here. Williams wants rigorous evidence before we "blame" social media. But democratic deliberation isn't a luxury good we can afford to lose while awaiting definitive studies. It's infrastructure. And infrastructure failures require action under uncertainty.

The Real Fix Isn't Algorithmic

Williams concludes that "no amount of platform regulation can fix" America's deep-seated divisions. I agree—but not for his reasons.

Platform regulation can't fix the problem because regulation addresses content when the problem is architecture. Better moderation won't restore high-bandwidth communication. Algorithm reform won't rebuild local associational life. Content policy won't revive the institutions that previously mediated between individual and society.

The solution isn't better platforms. It's recognizing that digital tools excel at conveyance—moving information—but catastrophically fail at convergence—building shared understanding. They're efficient for coordination but disastrous for deliberation.

This is why "touch grass" isn't anti-intellectual retreat—it's structural necessity. Democratic deliberation requires high-bandwidth interaction: face-to-face engagement, sustained over time, within communities that impose reputational costs for bad faith and reward good faith with trust.

Social media didn't just change how we discuss politics. It destroyed the Constitution of Knowledge—Jonathan Rauch's term for the slow, deliberative, institutionally embedded process through which free societies distinguish truth from falsehood. It replaced this with a market for attention where engaging content wins regardless of truth.

The experiments Williams cites show that social media doesn't persuade. But persuasion was never the mechanism of healthy democratic discourse. Understanding was. Convergence was. Trust-building was. These require architecture that social media structurally cannot provide.

Conclusion: Living in the Ruins

Williams asks why people believe social media broke democracy when the data doesn't support it. The answer is that they're experiencing the absence of what's been destroyed.

They remember when neighbors with different politics could discuss school board issues productively. They notice that online arguments never resolve, only escalate. They feel the exhaustion of constant performance. They experience the loneliness of algorithmically curated feeds that simulate community while delivering isolation.

These aren't third-person effects or confirmation bias. They're the lived reality of what happens when you replace high-bandwidth deliberation with low-bandwidth broadcasting.

Williams's evidence shows social media doesn't change what people believe. I'm arguing it's changed what kind of believers they can be—and therefore what kind of democracy we can sustain.

The wrecking ball didn't just hit the Capitol. It hit our capacity for the sustained, uncomfortable, high-friction engagement that democratic self-governance requires. We're living in the ruins of that capacity, surrounded by people who still have their opinions but have lost the ability to deliberate about them together.

That's not a persuasion problem. That's an architecture problem. And no amount of experimental evidence about attitude change will measure what's been destroyed.